Artificial Intelligence is by no means a new concept. Ever since the first computers came to be, humans have been trying to make them perform human tasks, at first, arguably, out of curiosity. For instance, chess-playing programs have been around since at least the seventies, when the first World Computer Chess Championship took place. As they grew stronger, there naturally arose a desire to have them beat the best humans, which finally happened in 1997, when Deep Blue won a six-game match against Kasparov, the reigning world champion. Besides the interesting world of chess programming, other forms of AI have entered the mainstream without any fuss about them being AI: a shortest-path finder that considers traffic, found in every modern GPS navigator; a robot that cleans rooms without having been given a map of them.

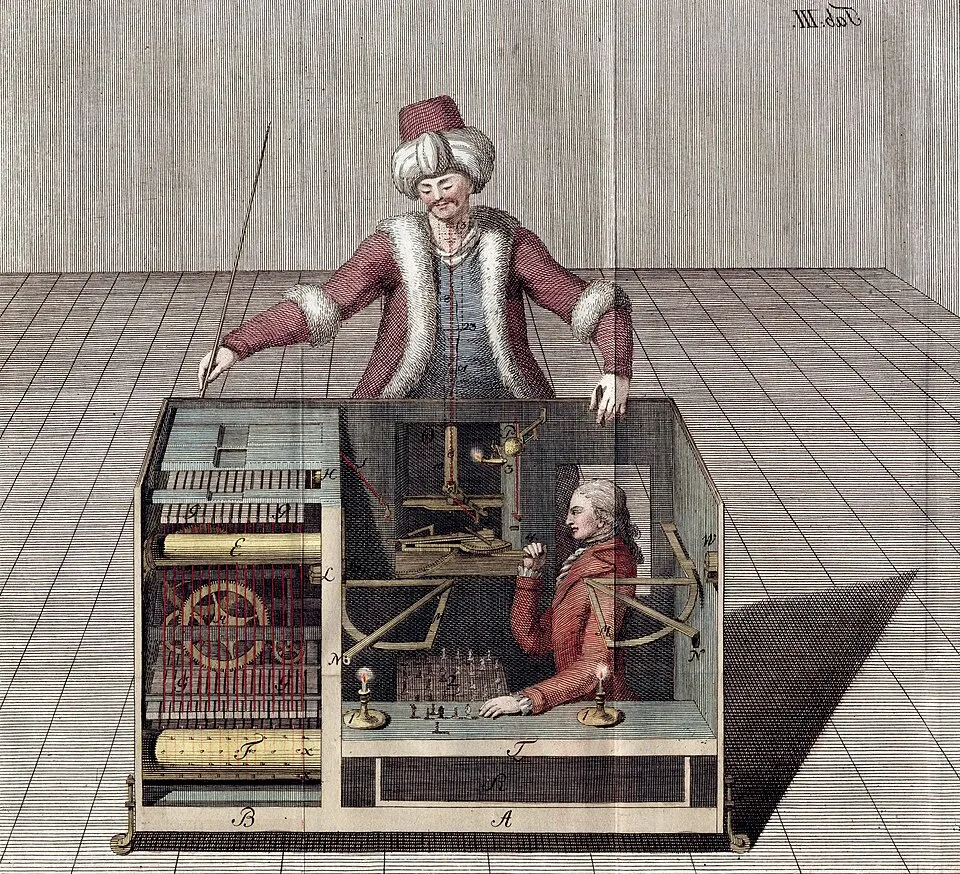

The Turk: a chess-playing machine from the 1770s whose pieces were in reality moved by a master hidden inside. Apparently made to impress Empress Maria Theresa, it ended up fooling the general public.

The forms of AI just described tend to be called symbolic artificial intelligence, or simply classical AI. These systems are intelligent in that they optimize a specific goal based on a set of predetermined rules. For example, a path finder searches through a graph-like space, weighs candidate routes from point A to point B, and yields the one that turns out to be quickest. It is a domain-specific algorithm that produces deterministic results, considered correct and optimal in its domain. For chess, the concept of correct is relative, but a classical engine will yield the same moves for the same position, according to some configured way of evaluating a board and ordering moves.
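To make the path finder concrete, here is a minimal sketch in Python. It uses Dijkstra's algorithm, which is one common choice and not necessarily what any particular GPS runs. The road network `ROADS`, its travel times and the function name `quickest_route` are all made up for illustration.

```python
import heapq


def quickest_route(graph, start, goal):
    """Dijkstra's algorithm: return (total_minutes, route), or (inf, []) if unreachable."""
    # Priority queue of (minutes so far, node, route taken to reach it).
    frontier = [(0, start, [start])]
    settled = set()
    while frontier:
        minutes, node, route = heapq.heappop(frontier)
        if node == goal:
            return minutes, route
        if node in settled:
            continue  # a quicker way to this node was already explored
        settled.add(node)
        for neighbour, cost in graph.get(node, {}).items():
            if neighbour not in settled:
                heapq.heappush(
                    frontier, (minutes + cost, neighbour, route + [neighbour])
                )
    return float("inf"), []


# Hypothetical road network: weights are travel times in minutes,
# assumed to be already adjusted for current traffic.
ROADS = {
    "A": {"C": 4, "D": 10},
    "C": {"D": 3, "B": 12},
    "D": {"B": 5},
    "B": {},
}

print(quickest_route(ROADS, "A", "B"))  # (12, ['A', 'C', 'D', 'B'])
```

Calling it again with the same network returns exactly the same route, which is the deterministic behaviour that sets classical AI apart: same input, same rules, same answer.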

The AI artifact currently in vogue is, on the contrary, highly unpredictable and operated through an imprecise communication mechanism, natural language, yet it is taking the world by storm. Generative AI automates a lot of boring stuff quickly and yields content that looks reasonable. In an agentic form, it can even take action and do work for us, even if it is not really that intelligent¹.

In Software Engineering

In the Software Engineering field, there is a movement towards writing less and less code day to day, focusing on “designing things” instead of “getting lost in implementation details”. This may translate into effectively switching our way of working from solving interesting problems with optimized, human-crafted code grounded in sensible abstractions (tools that we have sat down and thought through, based on all our experience, taste and vision, which are, for better or worse, unexplainable through words alone) to building endless specification documents, having an agent read them, iterating with it until the output looks reasonable, and shipping.

At first glance, this may lead to a considerable decay in software quality. However, with sufficient care and thorough testing, it is indeed possible to build a reliable system out of these mechanisms. At least until something breaks slightly in production and nobody knows what the cause could be: the ownership that arises from past experience is mostly gone, the LLM is lost because the issue was never seen before², and hundreds get lost in despair.

“Coding is like taking a lump of clay and slowly working it into the thing you want it to become. It is this process, and your intimacy with the medium and the materials you’re shaping, that teaches you about what you’re making – its qualities, tolerances, and limits – even as you make it. […] When you skip the process of creation you trade the thing you could have learned to make for the simulacrum of the thing you thought you wanted to make. Being handed a baked and glazed artefact that approximates what you thought you wanted to make removes the very human element of discovery and learning that’s at the heart of any authentic practice of creation.” – Aral Balkan on Mastodon

Let’s not get lost in radicalism here. Having a tool that autocompletes fast and is a good web searcher is very fitting for a software engineering role: a reasonable productivity booster with possibly negligible negative consequences³, if used mostly for that. Having it automate the building itself might, I am afraid, yield a culture of mediocrity, stagnation and disconnect, at least for anyone who enjoys what they studied 5+ years for (and counting, since learning is a never-ending process). LLMs are not just “the next compiler” or a “new technology”; when abused, they make work look like sloppy requirements engineering instead of a proper application of computer science.

In education and society

Most of the generative AI hype is arguably fueled, firstly, by extreme capitalist desires (produce more, faster, with fewer people), but secondly, and perhaps more importantly, by the very human desire to minimize suffering. It’s a basic biological imperative, even if, paradoxically, it is perhaps the fulfilling sensation of doing something good through our own genuine effort and learning that makes us happier in the long run.

The general problem here is that AI is not only automating the boring tasks (and struggling with the really boring ones, such as house chores) but also disincentivizing the very process of learning and being curious about the world. Why should a marketing and finance student worry about how to craft great presentations, with useful insights, if in the future they expect an agent to do just that well enough for the board to be satisfied? Students take shortcuts more than ever, and the ones who are still interested in learning get hired 50% less. Who will be the passionate ones in the future that continue to innovate?

The foreseeable future

There are many people who share the same concerns as I do, or hold others, such as the terrible impact this all has on data privacy and authorship rights. Others are more relaxed, either because they are focused on the short-term benefits, which are evident, or because they genuinely believe some of the hype will slow down: AI companies will eventually stop burning energy (perhaps equivalent to the consumption of a fourth of all US households in 2028) and billions of dollars, as the technology hits some plateau, speculation ends, and prices rise.

There is also an escape in case some form of real intelligence keeps getting closer: good politics and regulation, although choosing to believe in that is, as always, a leap of faith. I am not opposed to a future where we all produce art and research, and the world’s remaining resources are well managed by optimized high-tech machinery. However, having just read that back, it feels just like a fever dream.

Footnotes

1. Though not the subject of this post, and a deeply subjective matter, I could sum up my opinion as follows: for anyone to be intelligent, they need to be able to generate new knowledge through motivated exploration, and have enough empathy to determine whether there’s any point in pursuing it in the first place, i.e. whether it will do any good to anyone. Let’s not get into sentience here.

2. The effectiveness of an agent is deeply dependent on how it was trained on the subject at hand. It may trigger a web search, but for it to grasp what to search for and what the issue really is, it must have read through billions of words on the topic and built some semantic representation of it: an embedding.

3. Although sifting through outdated, confusing documentation and online discussions makes you more of an expert in the end, because you did it yourself.